Deep Dive into the Cursor Kimi Model: Elevating AI Code Editors with a 2M Token Context Window

Discover the power of the Cursor Kimi Model, an advanced AI code editor integration featuring a massive context window for repository-wide code analysis and debugging.

By iReadCustomer Team

The Evolution of the AI Code Editor

When discussing an AI code editor, most developers immediately think of GitHub Copilot or of using ChatGPT in a browser alongside a traditional IDE. Cursor took a different approach by building an AI-first IDE: a fork of VS Code that keeps users' extensions and keybindings while building AI assistance into the editor's core.

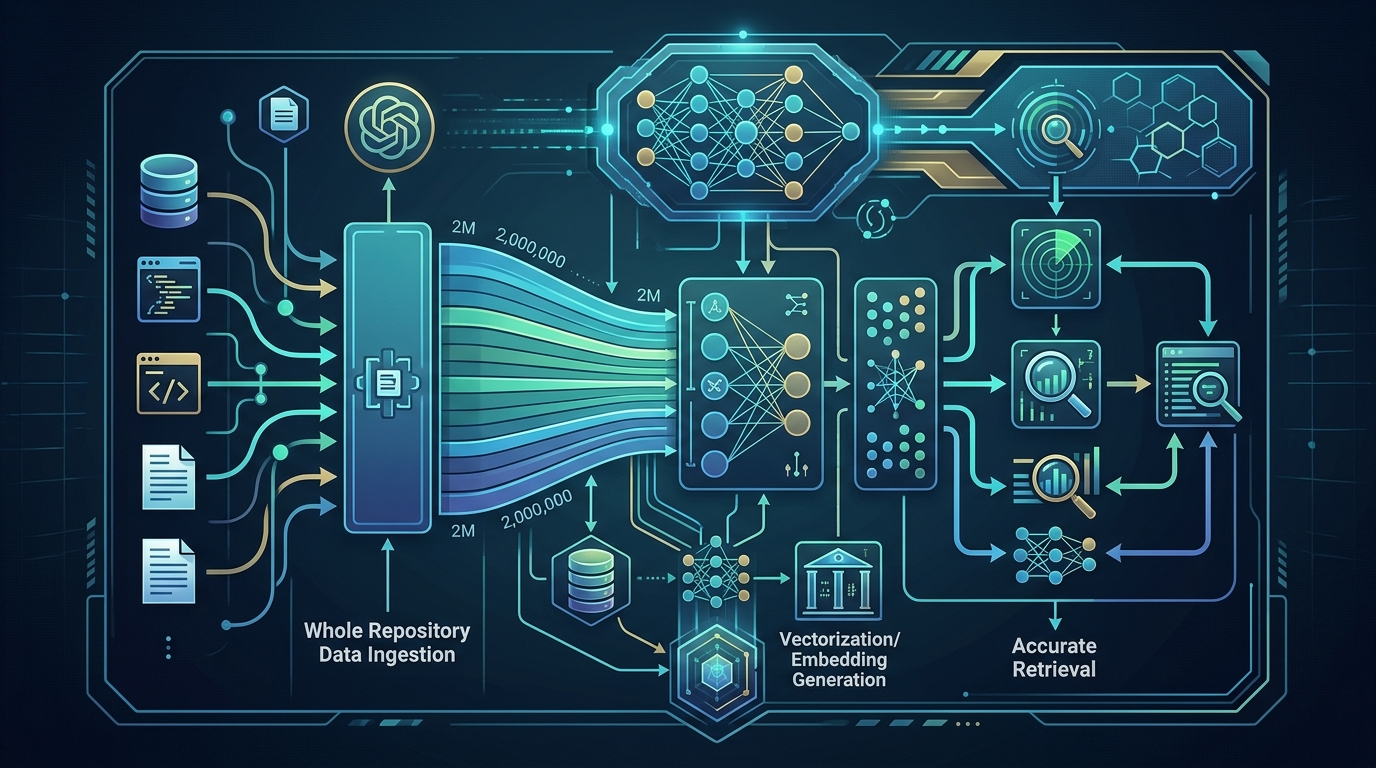

Bringing Kimi, the model developed by the startup Moonshot AI, into Cursor is a significant step forward. Kimi is known for its ability to ingest and synthesize very large amounts of text and documentation. The combination creates the Cursor Kimi Model experience, in which developers can feed an entire repository, large system logs, and extensive API documentation into a single prompt with far less risk of the model losing track of earlier material.

Unpacking the Long Context Window of Moonshot AI Kimi

Historically, the Achilles' heel of Large Language Models (LLMs) in software development has been the context limit. When the input exceeds a certain threshold, models suffer from hallucination or the "forgetting" of earlier constraints. Moonshot AI Kimi was specifically architected to obliterate this bottleneck.

With a long context window of up to 2 million tokens (roughly thousands of pages of text or hundreds of thousands of lines of code), the model operates on a different scale:

The "Needle in a Haystack" Retrieval Accuracy

Kimi has been evaluated using the "Needle in a Haystack" benchmark, which tests an AI's ability to extract an isolated piece of information buried deep within a very large input. For programmers, strong results on this kind of test suggest the model can locate a minor configuration bug inside a large, interconnected microservices architecture without losing the overarching context.
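As a rough illustration of how such a test is constructed, the Python sketch below plants a known line at several depths of a filler document and measures how often an answer contains the expected value. The helper names (`build_haystack`, `needle_recall`) and the `fake_model` stand-in are assumptions made for this example; they are not part of Cursor, Kimi, or any published benchmark harness.

```python
from typing import Callable


def build_haystack(filler: str, needle: str, total_lines: int, depth: float) -> str:
    """Return `total_lines` filler lines with `needle` inserted at a relative depth (0.0 to 1.0)."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    lines = [filler] * total_lines
    lines.insert(int(depth * total_lines), needle)
    return "\n".join(lines)


def needle_recall(ask_model: Callable[[str], str], expected: str, prompts: list[str]) -> float:
    """Fraction of prompts for which the model's answer contains the expected string."""
    if not prompts:
        return 0.0
    hits = sum(1 for prompt in prompts if expected in ask_model(prompt))
    return hits / len(prompts)


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call: it simply searches the prompt."""
    for line in prompt.splitlines():
        if line.startswith("MAX_RETRIES"):
            return line
    return "not found"


needle = "MAX_RETRIES = 7"
prompts = [
    build_haystack("# unrelated config comment", needle, total_lines=1000, depth=d)
    for d in (0.0, 0.25, 0.5, 0.75, 1.0)
]
score = needle_recall(fake_model, "7", prompts)
print(f"recall across depths: {score:.2f}")
```

In a real evaluation, `fake_model` would be replaced by a call to the model under test, and the haystack would be scaled toward the context limit being claimed.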

True Whole-Codebase Processing

When modernizing applications or executing legacy code refactoring, engineers can task the Cursor Kimi Model with reading the entire data structure and application logic upfront. The model can then formulate comprehensive refactoring strategies, drastically reducing the risk of unforeseen side effects in dependent modules.
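Before handing a whole codebase to a long-context model, it can help to estimate whether it fits in the window at all. The sketch below uses a common rule of thumb of about 4 characters per token, which is an assumption and not Kimi's actual tokenizer; real counts vary by language and model. Under that assumption and roughly 40 characters per line, 2 million tokens corresponds to something on the order of 200,000 lines of code. The function names, file extensions, and the reserve size are illustrative choices.

```python
import os

CHARS_PER_TOKEN = 4          # rough heuristic (assumption); real tokenizers differ
CONTEXT_BUDGET = 2_000_000   # window size quoted in this article


def estimate_tokens(text: str) -> int:
    """Estimate the token count of `text` by ceiling division on its character length."""
    return -(-len(text) // CHARS_PER_TOKEN)


def estimate_repo_tokens(root: str, extensions: tuple[str, ...] = (".py", ".ts", ".md")) -> int:
    """Sum the estimated tokens of every file under `root` with a matching extension."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name.endswith(extensions):
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as handle:
                    total += estimate_tokens(handle.read())
    return total


def fits_in_context(token_count: int, budget: int = CONTEXT_BUDGET, reserve: int = 50_000) -> bool:
    """Check the estimate against the budget, keeping `reserve` tokens for the question and answer."""
    return token_count + reserve <= budget


# Example: about 4 million characters of source is roughly 1 million tokens.
print(fits_in_context(estimate_tokens("x" * 4_000_000)))
```

Calling `estimate_repo_tokens(".")` from a project root gives a first approximation; a result close to the budget is a signal to exclude generated files or vendored dependencies.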

How the Cursor Kimi Model Transforms Developer Productivity Tools

Within the realm of developer productivity tools, speed and syntactic accuracy are paramount. Integrating Kimi into Cursor introduces several technical capabilities:

- @Codebase Contextual Chat: One of the most powerful features in Cursor is the @Codebase command (accessed via Cmd+L/Ctrl+L). Kimi utilizes its long context capabilities to scan, vector-match, and map relationships between variables, classes, and functions across the entire project before writing a single line of code or answering an architectural query.

- Smart Debugging via Massive Error Logs: When a production application crashes, dumping a 20,000-line stack trace into a standard AI model usually results in failure. Kimi, however, can digest these extensive logs, compare them against the current codebase state, and pinpoint memory leaks or race conditions.

- Automated End-to-End Documentation: The model can ingest the entire source code to automatically generate highly accurate technical specifications, OpenAPI/Swagger documentation, and README files that genuinely reflect the system's runtime mechanics.
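To make "mapping relationships between functions" concrete, here is a minimal Python sketch that uses the standard library `ast` module to record which functions call which across a set of files. It is only an illustration of the idea, not how Cursor or Kimi index a project; the `map_calls` helper and the two sample files are hypothetical, and the sketch only tracks direct calls by name (not method calls such as `obj.method()`).

```python
import ast
from collections import defaultdict


def map_calls(sources: dict[str, str]) -> dict[str, set[str]]:
    """Map each called function name to the 'file:function' sites that call it."""
    callers: dict[str, set[str]] = defaultdict(set)
    for filename, code in sources.items():
        tree = ast.parse(code, filename=filename)
        for func in ast.walk(tree):
            if not isinstance(func, (ast.FunctionDef, ast.AsyncFunctionDef)):
                continue
            # Walk the function body and record direct calls such as `apply_tax(...)`.
            for node in ast.walk(func):
                if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
                    callers[node.func.id].add(f"{filename}:{func.name}")
    return dict(callers)


# Two hypothetical files from a small project.
sources = {
    "billing.py": "def charge(user):\n    return apply_tax(user.total)\n",
    "tax.py": "def apply_tax(amount):\n    return round(amount * 1.07, 2)\n",
}
call_map = map_calls(sources)
for callee, sites in sorted(call_map.items()):
    print(callee, "<-", sorted(sites))
```

A map like this shows why cross-file context matters: changing `apply_tax` in `tax.py` affects `charge` in `billing.py`, a dependency that is invisible when only one file is in view.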

Technical Benchmarks and Enterprise Capabilities

For enterprises and SMBs, particularly those operating in multilingual environments like Thailand, choosing enterprise-grade technology requires careful consideration of language support, latency, and architectural costs. The Cursor Kimi Model excels in these criteria:

- Superior Bilingual Processing: A common challenge in Southeast Asian tech hubs is dealing with codebases that contain a mix of English code and local language (e.g., Thai) comments or product requirements. Moonshot AI Kimi exhibits excellent proficiency in Asian languages, allowing it to seamlessly bridge the gap between English logic and Thai contextual requirements.

- Optimal Token Economics: Processing vast amounts of context can be prohibitively expensive with top-tier Western models. Kimi offers a highly competitive price-to-performance ratio, enabling development teams to scale their AI usage for large codebases without burning through their operational budgets.

- Accelerated Developer Onboarding: Explaining a monolithic system's architecture to new engineers can take weeks. With this tool, a new hire can query the codebase deeply, understand complex data flows, and begin shipping production-ready features from week one.
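The token economics point above comes down to simple arithmetic: cost scales with the number of input and output tokens per request. The sketch below shows the calculation with placeholder prices. The figures in `placeholder` are invented for illustration and are not actual Moonshot AI, Cursor, or competitor pricing; substitute the current published rates before drawing any conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical per-million-token prices in USD; not real vendor prices."""
    input_per_million: float
    output_per_million: float


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a single request."""
    return (
        input_tokens / 1_000_000 * pricing.input_per_million
        + output_tokens / 1_000_000 * pricing.output_per_million
    )


def monthly_cost(
    pricing: Pricing,
    input_tokens: int,
    output_tokens: int,
    requests_per_day: int,
    working_days: int = 22,
) -> float:
    """Cost in USD of repeating the same request every working day of a month."""
    return request_cost(pricing, input_tokens, output_tokens) * requests_per_day * working_days


# Placeholder prices (assumption): 1.00 USD per million input tokens, 4.00 USD per million output tokens.
placeholder = Pricing(input_per_million=1.0, output_per_million=4.0)
per_request = request_cost(placeholder, input_tokens=500_000, output_tokens=2_000)
per_month = monthly_cost(placeholder, 500_000, 2_000, requests_per_day=40)
print(f"per request: ${per_request:.3f}, per month: ${per_month:.2f}")
```

With large prompts, input tokens dominate the bill, so the input price and any prompt caching a provider offers usually matter more than the output price.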

Conclusion: Scaling Engineering with the Cursor Kimi Model

The rapid growth of the AI code editor is redefining software engineering workflows. By harnessing the capabilities of the Cursor Kimi Model and its long context window, engineering teams can spend far fewer hours manually tracing execution paths or deciphering undocumented legacy systems. This integration stands as one of the most advanced developer productivity tools available today, helping businesses build resilient software, ship products faster, and adapt to the digital market's demands.

Frequently Asked Questions (FAQ)

How does the Cursor Kimi Model differ from GitHub Copilot?

While Copilot acts as an intelligent autocomplete within traditional IDEs, the Cursor Kimi Model operates within an AI-first editor environment. Combined with Kimi's 2-million-token context window, it can take your entire repository into account at once, whereas traditional models often analyze only the open files or the surrounding lines.

Is enterprise code secure when using Moonshot AI Kimi via Cursor?

Cursor provides a "Privacy Mode" designed for enterprise use, ensuring that your proprietary codebase is not used to train their models. However, organizations with strict compliance requirements should thoroughly review the Data Processing Agreements (DPAs) of both Cursor and Moonshot AI before deploying it for highly sensitive projects.

Can Kimi process non-English requirement documents alongside source code?

Yes. Kimi's robust multi-language capabilities and massive context limit allow developers to input comprehensive Product Requirement Documents (PRDs) in languages like Thai alongside their existing codebase. The model can cross-reference the native language requirements and generate accurate, English-based code structures seamlessly.