Fixing AI Data Infrastructure: Transforming Unstructured Multi-Cloud Silos

Discover how Thai enterprises can scale their machine learning models by modernizing their AI data infrastructure, mastering unstructured data management, and reducing multi-cloud friction.

Author: iReadCustomer Team

Why Traditional AI Data Infrastructure Fails Thai Enterprises

During the traditional Business Intelligence (BI) era, data architecture was optimized for structured datasets—SQL sales records, CRM entries, and standardized ERP logs. These legacy systems were designed to power human-readable dashboards and retrospective reporting.

Generative AI operates on an entirely different paradigm. Large Language Models (LLMs) depend on context, and much of that context is buried in corporate PDFs, call center transcripts, emails, and massive volumes of LINE Official Account chat histories. Thai companies hold terabytes of this valuable information, but much of it sits unused in disorganized "data swamps."

Data science teams often report spending most of their time (a commonly cited figure is around 80%) just hunting down and cleaning data. This inefficiency suggests that legacy storage solutions were never designed to support Thai enterprise AI scaling at the speed and precision required today.

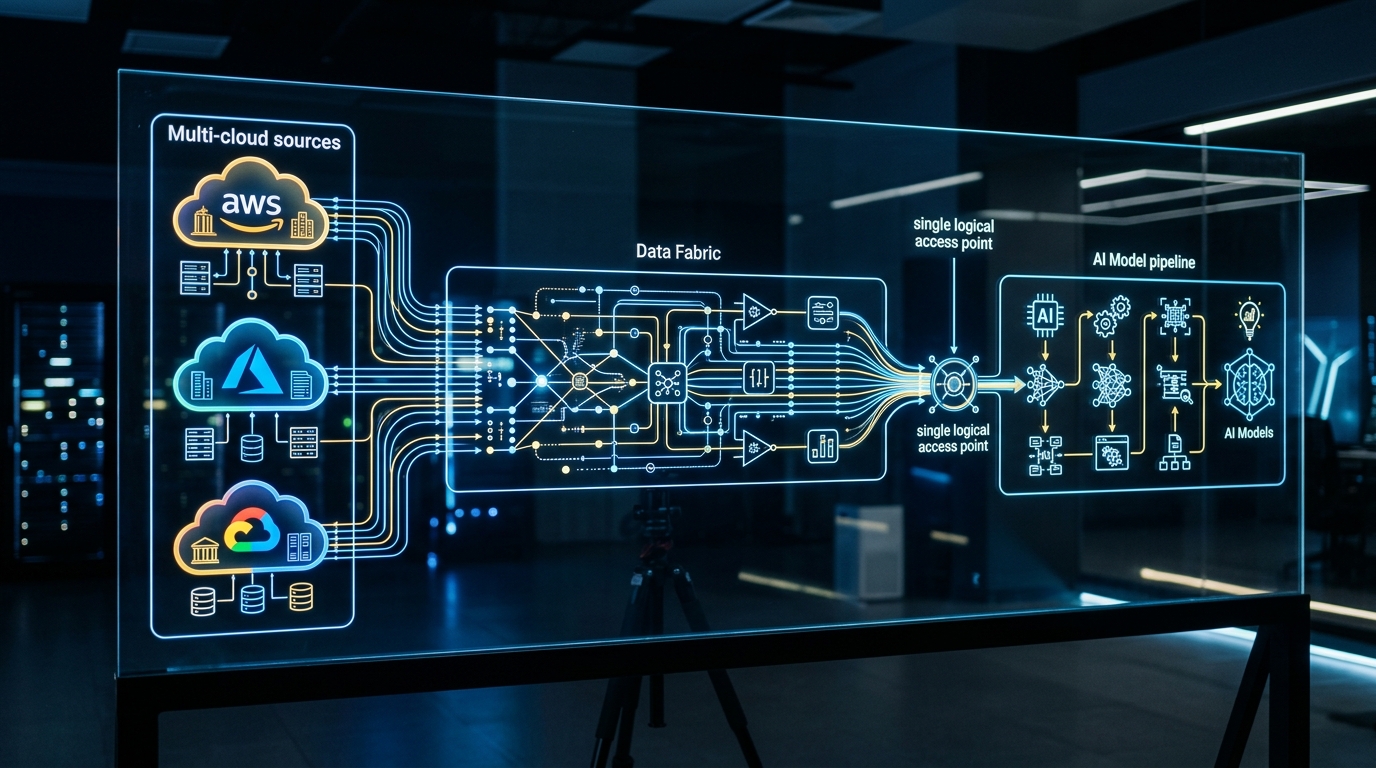

Overcoming Multi-Cloud Complexity in Southeast Asia

Multi-cloud complexity remains a massive operational hurdle. Large Thai enterprises, particularly in the banking and telecommunications sectors, frequently operate in an "accidental multi-cloud" environment. IT departments use Microsoft Azure for Active Directory and enterprise apps, data teams rely on Google Cloud Platform (GCP) for BigQuery analytics, and AI researchers prefer Amazon Web Services (AWS) for SageMaker.

When these environments remain siloed, migrating terabytes of data across cloud boundaries to feed an AI model is not only painfully slow but also incurs exorbitant egress fees.
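To make the egress concern concrete, here is a back-of-the-envelope sketch. The function name, the per-GB rate, and the data volumes are all illustrative assumptions, not quoted prices from any provider; actual rates vary by provider, region, and destination, so check the current price list before relying on a figure.

```python
def estimate_egress_cost(data_tb: float, rate_usd_per_gb: float,
                         transfers_per_month: int = 1) -> float:
    """Rough monthly egress cost in USD for copying data out of one cloud.

    All inputs are assumptions supplied by the caller; this is simple
    arithmetic, not a pricing API.
    """
    gigabytes = data_tb * 1024  # 1 TB treated as 1024 GB (an assumption)
    return gigabytes * rate_usd_per_gb * transfers_per_month


# Hypothetical scenario: 50 TB of documents re-copied weekly at an
# assumed rate of 0.09 USD per GB.
monthly = estimate_egress_cost(data_tb=50, rate_usd_per_gb=0.09,
                               transfers_per_month=4)
print(f"Estimated monthly egress: ${monthly:,.2f}")
```

Even with placeholder numbers, the shape of the formula shows why repeated cross-cloud copies scale badly: cost grows linearly with both volume and the number of transfers.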

Standardizing the Data Layer with Data Fabric

The solution lies in adopting a Data Fabric architecture, often combined with Data Mesh principles of domain-owned data. Rather than physically copying data from AWS to GCP, a Data Fabric creates a logical virtualization layer. It allows data scientists to query information across multiple clouds or legacy on-premises mainframes through a single, unified control plane. This reduces multi-cloud complexity and makes it easier to enforce consistent data governance in line with Thailand's Personal Data Protection Act (PDPA).
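The idea of a logical layer over physically separate stores can be sketched in a few lines. This is a toy illustration only: the `DataFabric` class, the `make_source` helper, and the dataset names are hypothetical, and in-memory SQLite databases stand in for sources that would really live in different clouds. A production fabric would add authentication, query pushdown, and access policies.

```python
import sqlite3


class DataFabric:
    """Toy logical layer: maps dataset names to whichever backend holds them."""

    def __init__(self):
        self._catalog = {}  # logical name -> (connection, physical table)

    def register(self, name, conn, table):
        """Record where a logical dataset physically lives."""
        self._catalog[name] = (conn, table)

    def read(self, name, limit=10):
        """Read rows by logical name; the caller never sees the backend."""
        conn, table = self._catalog[name]
        # Table names come from the trusted catalog, never from user input.
        query = f'SELECT * FROM "{table}" LIMIT ?'
        return conn.execute(query, (limit,)).fetchall()


def make_source(table, rows):
    """Create an in-memory database standing in for one cloud's data store."""
    conn = sqlite3.connect(":memory:")
    conn.execute(f'CREATE TABLE "{table}" (id INTEGER, payload TEXT)')
    conn.executemany(f'INSERT INTO "{table}" VALUES (?, ?)', rows)
    return conn


# Two separate "clouds", each holding its own data.
aws_like = make_source("call_transcripts", [(1, "transcript A"), (2, "transcript B")])
gcp_like = make_source("sales_events", [(10, "order placed")])

fabric = DataFabric()
fabric.register("support.transcripts", aws_like, "call_transcripts")
fabric.register("analytics.sales", gcp_like, "sales_events")

# One interface, no data copied between backends.
print(fabric.read("support.transcripts"))
print(fabric.read("analytics.sales"))
```

The design point is the catalog: consumers ask for `support.transcripts` and the layer decides where to fetch it, which is also a natural place to attach governance rules.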

Mastering Unstructured Data Management for RAG Pipelines

If you want an internal AI to answer nuanced questions about company policies or technical manuals, you must master unstructured data management. This is where Retrieval-Augmented Generation (RAG) pipelines shine.

The unique challenge in the Thai market is the complexity of the Thai language itself. Thai is written without spaces between words, so it requires dedicated word segmentation, and everyday text mixes Thai and English freely (code-mixing). Transforming Thai customer service voice logs or PDF manuals into AI-readable formats requires a rigorous, modernized data pipeline:

- Ingestion & Parsing: Extracting text from disparate sources (e.g., using Thai-optimized OCR for scanned corporate documents).

- Chunking: Splitting large texts into semantically meaningful chunks so context isn’t lost across token boundaries.

- Embedding: Converting text into high-dimensional vector embeddings using models specifically trained to understand Thai semantics.

- Vector Store: Indexing these vectors into a scalable Vector Database for ultra-fast, real-time similarity search.

This workflow is the backbone of proper unstructured data management, enabling LLMs to fetch highly relevant facts and reduce AI hallucinations.
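The chunking, embedding, and vector store steps above can be sketched with the standard library alone. Everything here is a simplified stand-in under stated assumptions: `chunk_text` uses overlapping character windows (one simple way to avoid depending on spaces, which Thai text lacks), `embed` is a toy hashed character-trigram vector rather than a trained Thai embedding model, and `VectorStore` is a brute-force in-memory list rather than a real vector database. All names are hypothetical.

```python
import hashlib
import math


def chunk_text(text, size=200, overlap=50):
    """Split text into overlapping character windows."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    if not text:
        return []
    step = size - overlap
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]


def embed(text, dim=1024):
    """Toy embedding: hashed character-trigram counts, L2-normalised.

    A stand-in for a real embedding model; it captures surface overlap only.
    """
    vec = [0.0] * dim
    for i in range(len(text) - 2):
        gram = text[i:i + 3].encode("utf-8")
        bucket = int.from_bytes(hashlib.md5(gram).digest()[:4], "big") % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class VectorStore:
    """Brute-force in-memory store using cosine similarity."""

    def __init__(self):
        self._items = []  # list of (chunk, vector)

    def add(self, chunk):
        self._items.append((chunk, embed(chunk)))

    def search(self, query, k=3):
        """Return the k most similar chunks as (score, chunk) pairs."""
        q = embed(query)
        scored = [(sum(a * b for a, b in zip(q, v)), chunk)
                  for chunk, v in self._items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]


docs = [
    "Refund policy: customers may request a refund within 30 days of purchase.",
    "Shipping policy: orders ship within two business days.",
]

store = VectorStore()
for doc in docs:
    for chunk in chunk_text(doc, size=80, overlap=20):
        store.add(chunk)

top = store.search("How do I request a refund?", k=1)
print(top[0][1])
```

In a real pipeline the retrieved chunks would be placed into the LLM prompt as context. Swapping `embed` for a Thai-capable embedding model and `VectorStore` for a managed vector database would leave the overall flow unchanged.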

Cost-Efficient Data Transformation Strategies

Running AI pipelines at an enterprise scale burns through capital fast. Therefore, implementing cost-efficient data transformation strategies is a non-negotiable requirement. Enterprises must adopt FinOps (Financial Operations) mindsets tailored for data engineering.

The Medallion Architecture (Bronze, Silver, Gold)

One proven method for cost-efficient data transformation is the Medallion architecture:

- Bronze Layer: Stores raw, unvalidated data precisely as it was ingested (kept in ultra-cheap object storage).

- Silver Layer: Data is cleansed, filtered, and stripped of Personally Identifiable Information (PII).

- Gold Layer: Highly refined, context-dense data that is instantly ready for LLM consumption.

Feeding raw, messy data directly into an LLM API spends tokens, and therefore money, on content that adds no useful context. Pre-processing data up to the Gold Layer before it reaches the model keeps prompts small and cuts that waste. Compute costs can be reduced further by running heavy batch transformations on discounted capacity, such as spot or preemptible instances, or in scheduled windows when reserved clusters would otherwise sit idle.
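The three layers can be illustrated with a minimal sketch. The function names, the sample records, and the PII patterns are assumptions made for illustration; in particular, the two regular expressions are deliberately simplistic and would miss many real-world email and phone formats, so they should not be treated as a PDPA-grade PII filter.

```python
import re

# Simplistic illustrative patterns (assumptions, not a complete PII detector).
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\b0\d{1,2}[- ]?\d{3}[- ]?\d{4}\b")


def to_bronze(raw_records):
    """Bronze: keep records exactly as ingested."""
    return list(raw_records)


def to_silver(bronze):
    """Silver: drop empty rows, normalise whitespace, mask PII."""
    silver = []
    for record in bronze:
        text = " ".join(record.split())
        if not text:
            continue
        text = EMAIL_RE.sub("[EMAIL]", text)
        text = PHONE_RE.sub("[PHONE]", text)
        silver.append(text)
    return silver


def to_gold(silver, min_chars=20):
    """Gold: de-duplicate and keep only records long enough to carry context."""
    seen, gold = set(), []
    for text in silver:
        if len(text) >= min_chars and text not in seen:
            seen.add(text)
            gold.append(text)
    return gold


raw = [
    "  Customer somchai@example.com asked about   the refund window. ",
    "",
    "Call back at 081-234-5678 regarding invoice 1042",
    "ok",
    "Call back at 081-234-5678 regarding invoice 1042",
]

bronze = to_bronze(raw)
silver = to_silver(bronze)
gold = to_gold(silver)

# Character counts as a rough proxy for how much text would reach the LLM.
print("raw chars:", sum(len(r) for r in bronze))
print("gold chars:", sum(len(g) for g in gold))
for line in gold:
    print(line)
```

Only the Gold records would be sent to the model, so duplicates, empty rows, and low-context fragments never consume tokens, while the untouched Bronze copy remains available for reprocessing.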

Building a Future-Proof AI Data Infrastructure

The road to AI dominance doesn’t begin with clever prompt engineering or buying the most expensive foundational models. It starts deep in the server room, by architecting a resilient AI data infrastructure. For Thai businesses looking to pull ahead of the competition, resolving multi-cloud complexity, wrangling unstructured data management, and rigorously enforcing cost-efficient data transformation are the true keys to unlocking sustainable Thai enterprise AI scaling.

Frequently Asked Questions (FAQ)

How does a Data Fabric differ from a Data Lake?

A Data Lake is a centralized repository where raw data is physically stored. A Data Fabric, by contrast, is a virtualized architectural layer that logically connects disparate data stores (across multiple clouds or on-premises), allowing access without physically moving the data.

Why is a RAG architecture so critical for enterprise AI?

RAG (Retrieval-Augmented Generation) allows GenAI applications to retrieve proprietary, up-to-date company data at query time to answer questions. This substantially reduces hallucinations and improves answer accuracy without requiring you to expose private data for training public foundation models.

How can Small and Medium Businesses (SMBs) build an AI data pipeline on a tight budget?

SMBs can keep costs down by using open-source data orchestration tools, storing their Bronze data layer in cheap cloud object storage, and choosing serverless Vector Databases with usage-based pricing, so that charges track the amount of data actually queried or indexed.