Mastering Enterprise Monorepos using Cursor Composer 2 and Kimi Model

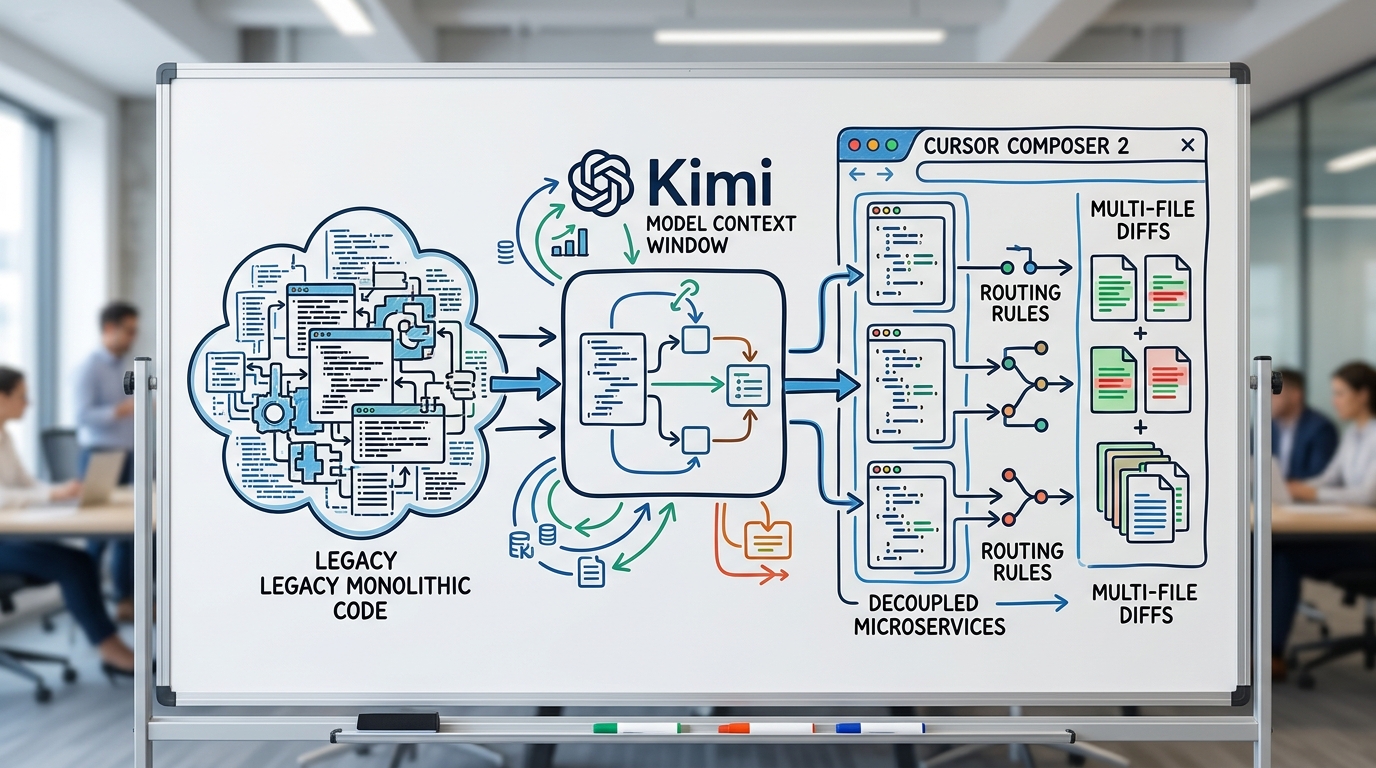

Discover how Thai enterprise development teams can tackle legacy tech debt and refactor massive monorepos using Cursor Composer 2 with the Kimi model for long-context window management.

iReadCustomer Team

Author

Why Thai Enterprises Need Long Context LLM Coding

Integrating AI into software development is no longer a novelty. Most development teams are well-acquainted with utilizing tools like GitHub Copilot or ChatGPT to generate boilerplate or micro-functions. However, when it comes to refactoring enterprise monorepos, developers hit a massive roadblock: the "Context Window Limit."

Standard LLMs typically cap out around 128k to 200k tokens. While this suffices for reading a few dozen files, enterprise projects are riddled with complex dependencies, sprawling configuration files, database schemas, and fragmented business logic. Pushing standard models to these limits often results in the "lost in the middle" phenomenon—the AI forgets crucial definitions situated in the middle of the prompt, resulting in generated code that fails to integrate seamlessly with the existing system.
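To get a feel for how quickly a monorepo outgrows such a window, a rough token estimate can help. The sketch below is illustrative only: the 4-characters-per-token ratio is a common rule of thumb rather than any model's actual tokenizer, and `SourceFile`, `estimateTokens`, `totalTokens`, and `fitsInWindow` are hypothetical names.

```typescript
// Assumption: roughly 4 characters per token. Real tokenizers vary by model and language.
const CHARS_PER_TOKEN = 4;

interface SourceFile {
  path: string;
  content: string;
}

// Estimate the token count of one piece of text from its character length.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

// Sum the estimates for every file that would be sent as context.
function totalTokens(files: SourceFile[]): number {
  return files.reduce((sum, file) => sum + estimateTokens(file.content), 0);
}

// Check whether the files fit in the window while leaving room for the model's reply.
function fitsInWindow(
  files: SourceFile[],
  windowTokens: number,
  reservedForOutput: number = 8000
): boolean {
  return totalTokens(files) + reservedForOutput <= windowTokens;
}
```

Under these assumptions, 600,000 characters of source come to about 150,000 tokens, which already exceeds a 128k window before any instructions or output are counted.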

The advent of long context LLM coding serves as the missing puzzle piece. It lets engineering teams feed entire repository directories to the AI so it can reason about the code's structure, much as a map of the Abstract Syntax Tree (AST) would, across many files at once. This substantially reduces the burden on senior developers, who would otherwise spend hours on manual impact analysis before a major refactor.

Deep Dive: Cursor Composer 2 with Kimi Model Capabilities

The synergy between these two cutting-edge technologies creates a new workflow known as Agentic Software Engineering. It moves beyond line-by-line autocomplete, allowing the AI to act as an autonomous software architect.

The Long Context Advantage of Moonshot's Kimi

Kimi, a model developed by Moonshot AI, stands out for its large context window and high retrieval accuracy. Other setups often rely heavily on RAG (Retrieval-Augmented Generation), which risks missing crucial yet subtly referenced files, whereas Kimi is capable of "Full-context Loading." With the relevant code loaded in full, it can follow cross-file function calls, intricate dependency injection, and even the coding conventions specific to your engineering team.

Agentic Multi-File Orchestration in Composer 2

Cursor's latest iteration introduced Composer 2, completely overhauling the UI and UX for AI-assisted coding. Instead of chatting with an AI via a side panel, Composer 2 acts as a central control plane. By pressing Ctrl+I or Cmd+I, developers summon a command interface where the AI calculates and proposes code diffs across multiple files simultaneously. You can inline-accept or reject changes file by file. Paired with Kimi's bird's-eye view of the repository, sweeping architectural migrations or system-wide API version updates that used to take days can now be orchestrated in minutes.

Practical Workflow: Refactoring Enterprise Monorepos

To contextualize this, consider a scenario where a Thai e-commerce development team needs to migrate a monolithic cart calculation module into a cleanly decoupled microservice.

Step 1: Codebase Indexing and Context Selection

Even though Kimi handles massive context payloads well, the best practice when using Cursor Composer 2 with the Kimi model is to combine the @Codebase feature with specific folder targeting. You can start the context phase by typing:

@src/legacy/cart @src/models/product @src/utils/tax_calculator

These references pull the pertinent logic into Kimi's context window in full detail, without spending tokens on irrelevant front-end assets.
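The effect of scoping can be pictured as a simple path filter. The sketch below only illustrates the idea and is not how Cursor resolves @ references internally; `isUnderScope` and `selectContext` are hypothetical helpers, and the file paths are made up for the example.

```typescript
// Return true when filePath is the scope itself or lives somewhere beneath it.
function isUnderScope(filePath: string, scope: string): boolean {
  const prefix = scope.replace(/\/+$/, ""); // drop trailing slashes
  return filePath === prefix || filePath.indexOf(prefix + "/") === 0;
}

// Keep only the files that fall under at least one selected scope.
function selectContext(allPaths: string[], scopes: string[]): string[] {
  return allPaths.filter((path) => scopes.some((scope) => isUnderScope(path, scope)));
}

const selectedScopes = ["src/legacy/cart", "src/models/product", "src/utils/tax_calculator"];
const repoPaths = [
  "src/legacy/cart/calculate.ts",
  "src/legacy/cartoon/banner.ts", // similar prefix, different folder
  "src/models/product/index.ts",
  "src/ui/components/Header.tsx",
  "src/utils/tax_calculator/index.ts",
];

console.log(selectContext(repoPaths, selectedScopes));
```

Here the banner and header files are filtered out, so only the three files under the selected folders would count against the token budget.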

Step 2: Designing the Prompt for Multi-File Refactoring

Writing prompts for enterprise-scale tasks requires an architecture-driven approach. For instance:

"Analyze the referenced cart calculation logic. Extract the discount calculation and tax logic into a new standalone Next.js service module under /src/services/cart. Update all existing references in the UI components to use the new service via async API calls. Ensure TypeScript interfaces for CartItem are strictly typed and shared in /src/types/index.ts."
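For orientation, the kind of module such a prompt aims at might look roughly like the following. This is a hand-written sketch, not output from Composer or Kimi: the fields of `CartItem`, the `CartTotals` type, the `calculateCartTotals` function, and the discount and tax rates are all assumptions, since the scenario does not specify them. In a real project the interfaces would be exported from the shared types file.

```typescript
// Hypothetical shape: the scenario names CartItem but does not define its fields.
interface CartItem {
  sku: string;
  unitPriceMinor: number; // price in minor currency units (for example satang) to avoid float drift
  quantity: number;
}

interface CartTotals {
  subtotalMinor: number;
  discountMinor: number;
  taxMinor: number;
  totalMinor: number;
}

// Pure function with no UI or database access, so it could sit behind an API route.
// discountRate and taxRate are fractions between 0 and 1.
function calculateCartTotals(items: CartItem[], discountRate: number, taxRate: number): CartTotals {
  if (discountRate < 0 || discountRate > 1 || taxRate < 0 || taxRate > 1) {
    throw new RangeError("rates must be between 0 and 1");
  }
  const subtotalMinor = items.reduce((sum, item) => sum + item.unitPriceMinor * item.quantity, 0);
  const discountMinor = Math.round(subtotalMinor * discountRate);
  const taxableMinor = subtotalMinor - discountMinor;
  // Assumption: tax is applied after the discount.
  const taxMinor = Math.round(taxableMinor * taxRate);
  return { subtotalMinor, discountMinor, taxMinor, totalMinor: taxableMinor + taxMinor };
}

const exampleTotals = calculateCartTotals(
  [
    { sku: "A-100", unitPriceMinor: 10000, quantity: 2 },
    { sku: "B-200", unitPriceMinor: 5000, quantity: 1 },
  ],
  0.1, // 10% discount, illustrative
  0.07 // 7% tax rate, illustrative
);
console.log(exampleTotals);
```

Keeping the calculation free of UI concerns is what lets the components call it through an async API instead of importing legacy code directly.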

Step 3: Reviewing and Approving Composer Diffs

Cursor Composer 2 leverages Kimi to execute a Chain of Thought plan. It then autonomously creates new files, deletes redundant legacy code, and updates import paths in affected components. Developers review the inline diffs as they are produced. Because Kimi keeps the referenced files in context, common errors such as incorrect import paths or forgotten variable updates in distant files become far less likely, although every diff still deserves a careful review.
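Alongside the visual review, a small mechanical check can confirm that no file still imports from the retired module. The sketch below is one hedged way to do that over in-memory file contents; `FileText`, `StaleImport`, `findStaleImports`, and the `legacy/cart` path fragment are hypothetical, and the regular expression only covers common static import and require forms.

```typescript
interface FileText {
  path: string;
  content: string;
}

interface StaleImport {
  path: string;
  line: number; // 1-based line number
  specifier: string;
}

// Matches: import ... from "x", import "x", and require("x"), with single or double quotes.
const IMPORT_PATTERN = /(?:from\s+|import\s+|require\(\s*)["']([^"']+)["']/;

// List every import whose module specifier still contains the legacy path fragment.
function findStaleImports(files: FileText[], legacyFragment: string): StaleImport[] {
  const stale: StaleImport[] = [];
  for (const file of files) {
    file.content.split("\n").forEach((text, index) => {
      const match = IMPORT_PATTERN.exec(text);
      if (match && match[1].indexOf(legacyFragment) !== -1) {
        stale.push({ path: file.path, line: index + 1, specifier: match[1] });
      }
    });
  }
  return stale;
}

const changedFiles: FileText[] = [
  { path: "src/ui/CartSummary.tsx", content: 'import { getTotals } from "../services/cart";' },
  { path: "src/ui/Checkout.tsx", content: 'import { calcTax } from "../legacy/cart/tax";\nconst ready = true;' },
];
console.log(findStaleImports(changedFiles, "legacy/cart"));
```

In this example only the checkout file is reported, on line 1, because it still points at the legacy folder.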

Cost-to-Performance and Security Considerations

A primary concern for large organizations is data security. Transmitting millions of tokens of proprietary source code to external models necessitates rigorous compliance with data privacy policies. Fortunately, Cursor offers a strict Privacy Mode, ensuring that your codebase is never used to train future models.

From a tokenomics perspective, refactoring enterprise monorepos with flagship long-context models incurs a higher per-prompt API cost. However, when weighed against the man-hours a team of engineers would need for a manual refactor, which often stretches across weeks or months, the return on that spend can be substantially better.
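The trade-off can be made concrete with simple arithmetic. Every number in the sketch below is a placeholder chosen for illustration, not a real price or a measured result; `RefactorEstimate`, `apiCost`, and `compareCosts` are hypothetical names, and the model assumes engineers still spend time reviewing AI-generated diffs.

```typescript
interface RefactorEstimate {
  prompts: number;               // number of long-context requests
  inputTokensPerPrompt: number;
  outputTokensPerPrompt: number;
  inputPricePerMillion: number;  // currency units per 1M input tokens (placeholder)
  outputPricePerMillion: number; // currency units per 1M output tokens (placeholder)
  hourlyRate: number;            // loaded cost of one engineer hour (placeholder)
  manualHours: number;           // hours for a fully manual refactor
  reviewHours: number;           // hours spent reviewing AI-proposed diffs
}

// Total API spend: per-prompt input and output cost, times the number of prompts.
function apiCost(e: RefactorEstimate): number {
  const perPrompt =
    (e.inputTokensPerPrompt / 1e6) * e.inputPricePerMillion +
    (e.outputTokensPerPrompt / 1e6) * e.outputPricePerMillion;
  return e.prompts * perPrompt;
}

// Compare a manual refactor with an AI-assisted one that still includes human review.
function compareCosts(e: RefactorEstimate): { manual: number; assisted: number; savings: number } {
  const manual = e.manualHours * e.hourlyRate;
  const assisted = apiCost(e) + e.reviewHours * e.hourlyRate;
  return { manual, assisted, savings: manual - assisted };
}

const placeholderEstimate: RefactorEstimate = {
  prompts: 40,
  inputTokensPerPrompt: 500000,
  outputTokensPerPrompt: 10000,
  inputPricePerMillion: 1,
  outputPricePerMillion: 4,
  hourlyRate: 50,
  manualHours: 160,
  reviewHours: 20,
};
console.log(compareCosts(placeholderEstimate));
```

With these placeholder inputs the API spend is small next to the review time, which suggests the estimate is most sensitive to how many engineer hours the review still takes; teams should substitute their own prices and hours.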

Conclusion

Breaking through the traditional boundaries of legacy codebase management is no longer an insurmountable feat. Adopting Cursor Composer 2 with the Kimi model as the backbone for long context LLM coding directly addresses enterprise software development bottlenecks. For tech teams in Thailand, this means effectively conquering technical debt, ensuring codebase stability, and radically accelerating time-to-market for modern digital features.

FAQ

How does Cursor Composer 2 differ from GitHub Copilot?

Composer 2 is designed as an agentic tool for multi-file orchestration. It can analyze, propose changes, generate new files, and restructure an entire project simultaneously. In contrast, standard Copilot primarily excels at predictive line-by-line autocomplete within an active file.

Is the Kimi model secure enough for banking or large enterprise source code?

When using Cursor on Pro or Enterprise tiers, Privacy Mode can be enforced. This guarantees your codebase is not stored or used for further model training. However, organizations should always evaluate external LLM integration against their internal Data Governance policies.

How API-intensive is Long Context LLM Coding?

Because the context windows are exceptionally large, the token consumption per inference is high, leading to increased costs per prompt. It is recommended to use scoping tools like @Codebase or specifically reference target directories to optimize context relevance and maintain cost efficiency.