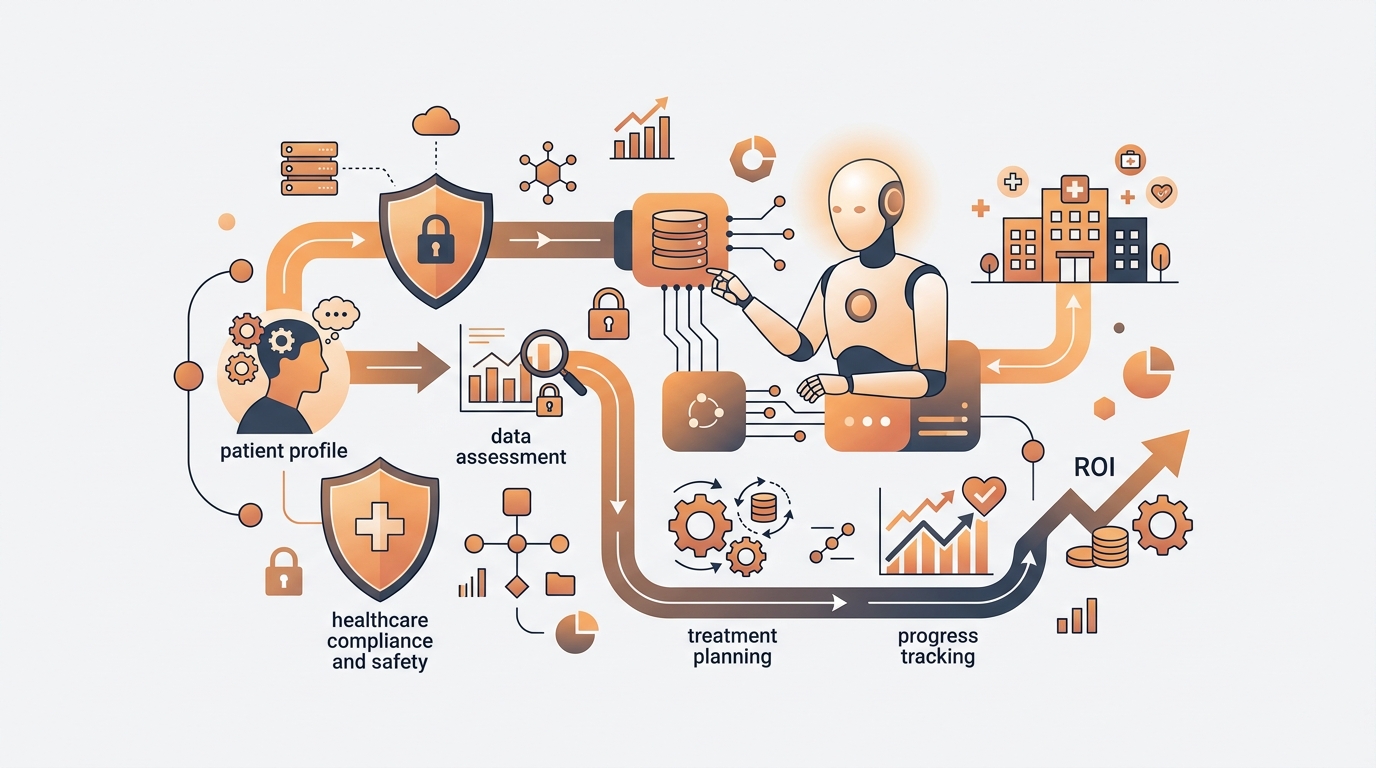

How to Build a Safe AI Mental Health Workflow Implementation That Drives ROI

Clinics lose thousands and risk compliance when deploying AI poorly. Learn how to build a mental health AI workflow that protects patient data, escalates crises instantly, and recovers clinical hours.

By iReadCustomer Team

Last October, a regional behavioral health network in Ohio missed 412 late-night patient inquiries because its on-call triage system relied entirely on two exhausted nurses. Implementing an AI mental health workflow is not about chasing the latest tech trend; it is about plugging a massive operational leak while improving the standard of care for your patients.

The Hidden Cost of Broken Mental Health Triage

An inefficient triage system is the primary reason clinics lose revenue and burn out their clinical staff. It forces highly paid licensed professionals to spend hours doing redundant paperwork and leaves desperate patients waiting overnight for basic answers. Forcing a trained psychologist to field basic questions about clinic hours or insurance coverage is the most expensive misuse of resources in your organization.

The American Psychiatric Association reports that clinics lose tens of thousands of dollars annually from inquiries that go unanswered after hours. Clinical staff spend nearly 30% of their working day categorizing new patient symptoms instead of delivering actual therapy. This compounding administrative fatigue contributes to the high turnover rates seen across healthcare today.

Replacing a departing licensed therapist costs a clinic an average of $40,000 in recruiting, onboarding, and lost productivity. Trying to fix this by simply hiring more night-shift administrative staff is not a viable ROI play, because the volume of midnight inquiries is highly unpredictable.

The Midnight Crisis Gap

The hours between 2 AM and 5 AM are when mental health patients are most vulnerable, yet this is exactly when clinics have the fewest resources available. Leaving these patients to face generic "we are closed" autoresponders creates a sense of isolation and introduces massive risk.

The anatomy of out-of-hours operational failure:

- 45% of new patients abandon their appointment intent if they do not receive a response within an hour.

- Clinical teams waste the first two hours of every morning clearing the backlog of overnight messages.

- Clinics face severe legal risk if a patient sends a crisis message that goes unseen until 8 AM.

- Management burns budget paying overtime to triage nurses on nights with zero high-acuity cases.

- Competitors leveraging automated intake systems easily capture patients seeking immediate direction.

The Burnout Toll on Licensed Professionals

Doctors and therapists did not endure years of schooling to operate as glorified call center agents. Forcing them to handle repetitive intake inquiries is a guaranteed recipe for burnout that clinic owners completely underestimate.

- Therapists spend an average of 15 minutes per case just confirming basic medical history.

- Context-switching between deep clinical therapy and shallow administrative tasks shatters focus.

- Job satisfaction drops by 40% when licensed professionals are forced to handle after-hours triage.

- Patients feel rushed during actual sessions because the provider's schedule is packed with admin delays.

- The opportunity cost of lost billable therapy hours creates a massive bottleneck for clinic growth.

Why AI Mental Health Workflow Implementation Requires Strict Governance

AI in behavioral health is a routing engine, not a clinical provider. It requires strict governance because delivering accidental clinical advice creates massive legal liability that can shut down a practice overnight.

Many clinic operators fail by deploying off-the-shelf customer service chatbots to interact with patients, without realizing that health data is regulated far more strictly than retail data. Frameworks like HIPAA in the US or GDPR in Europe carry heavy financial penalties if patient history is mishandled. You must architect the system so the AI operates strictly as an "administrative assistant" that never crosses professional boundaries.

Legal risk drops sharply once you hardcode your software to refuse medical advice. The safest AI system is one that knows exactly how to politely say "no" and immediately escalate the conversation to a human.

Defining the "Not Medical Advice" Boundaries

Standard generative bots are programmed to be helpful conversationalists, which is incredibly dangerous in a behavioral health context. A standard bot might hallucinate coping mechanisms or improperly suggest medication adjustments.

Mandatory guardrails for your AI mental health workflow implementation:

- Display an explicit disclaimer before the chat begins stating this is a triage system, not a doctor.

- Block any vocabulary related to prescribing, dosage adjustments, or clinical diagnoses.

- Force the AI to respond to symptom inquiries using only pre-approved, static clinical templates.

- Prohibit the AI from attempting to summarize the root cause of a patient's emotional distress.

- Maintain an immutable audit trail of every interaction for legal compliance.
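
The guardrails above could be wired together roughly as follows. This is a minimal sketch, not a production filter: `BLOCKED_PATTERNS`, `REFUSAL`, `AuditLog`, and `guard_reply` are hypothetical names, the pattern list is illustrative only, and an in-memory list stands in for the tamper-evident storage a real audit trail would need.

```python
import re
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical blocked vocabulary; a real list would be owned by clinical staff.
BLOCKED_PATTERNS = [r"\bdos(e|age)\b", r"\bprescri\w*", r"\bdiagnos\w*"]

# Pre-approved static refusal (assumed wording).
REFUSAL = (
    "I can't help with medical questions. "
    "I'm connecting you with a member of our clinical team."
)


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, patient_msg: str, reply: str, blocked: bool) -> None:
        # Append-only by convention; this sketch does not enforce immutability.
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "patient_msg": patient_msg,
            "reply": reply,
            "blocked": blocked,
        })


def guard_reply(patient_msg: str, draft_reply: str, log: AuditLog) -> str:
    """Pass the draft reply through only if neither side uses blocked vocabulary."""
    text = f"{patient_msg} {draft_reply}".lower()
    blocked = any(re.search(pattern, text) for pattern in BLOCKED_PATTERNS)
    reply = REFUSAL if blocked else draft_reply
    log.record(patient_msg, reply, blocked)
    return reply
```

Checking the patient's message as well as the draft reply means a dosage question is refused even when the model's answer happens to avoid the blocked words.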

Privacy and Consent Frameworks

Mental health data is among the most sensitive categories of personal data, and under HIPAA it is protected health information (PHI). You cannot simply pipe patient messages into a public AI model without explicit consent and robust encryption.

- Patients must click to provide explicit consent acknowledging they are speaking with an automated system.

- All patient data must be encrypted both in transit and at rest.

- Access to conversation histories must be strictly role-based (only assigned clinicians and senior admins).

- Implement automatic PII stripping (removing names, phone numbers) before data hits the processing model.

- Provide a one-click mechanism for patients to request complete deletion of their conversation history.
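
As an illustration of the PII-stripping step, the sketch below redacts emails, phone numbers, and known names before a message leaves your systems. It is an assumption-heavy example: `strip_pii` and both regular expressions are hypothetical, and simple patterns like these will miss many real identifiers, so treat it as a starting point rather than a compliance control.

```python
import re

# Illustrative patterns only; real de-identification needs broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def strip_pii(message: str, known_names: list[str]) -> str:
    """Replace emails, phone numbers, and known names with placeholders."""
    redacted = EMAIL.sub("[EMAIL]", message)
    redacted = PHONE.sub("[PHONE]", redacted)
    for name in known_names:
        # known_names would come from the patient record (assumed input).
        redacted = re.sub(
            rf"\b{re.escape(name)}\b", "[NAME]", redacted, flags=re.IGNORECASE
        )
    return redacted


print(strip_pii("I'm Jane Doe, call 555-123-4567 or jane@example.com", ["Jane Doe"]))
# prints: I'm [NAME], call [PHONE] or [EMAIL]
```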

Mapping the Patient Journey for AI Integration

Data readiness determines if your AI acts as a helpful assistant or a dangerous liability. Clean, structured historical data allows the system to accurately categorize patient needs and route them without human intervention.

Most clinics have patient data scattered across paper forms, legacy spreadsheets, and outdated Health Information Systems (HIS). Layering AI over a chaotic internal process simply scales the chaos faster. You must first map the patient journey, tracking every step from the initial chat ping to the final payment.

A successful workflow map identifies exactly which touchpoints are safe for automation and which require a human touch. If your clinic cannot list its patient intake steps on a whiteboard, you are not ready to integrate AI tools of any kind.

Assessing Your Data Readiness

AI requires clean, structured training data to understand the specific context of your clinic. It needs to know how you name your therapy packages and the specific schedules of your providers.

The mental health data readiness checklist:

- Export 6 months of historical chat/email logs to identify the top 20 most frequent inquiries.

- Categorize these historical questions strictly into admin, basic triage, and crisis buckets.

- Build an internal knowledge base of answers approved by your clinical director.

- Cleanse the training data by redacting all legacy patient names and identifying details.

- Format your provider schedules and service lists into easily queryable API formats.
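
The first two checklist items can be prototyped in a few lines before you buy any tooling. The sketch below assumes your exported logs are already a list of redacted message strings; `BUCKET_KEYWORDS`, `bucket_for`, and `summarize` are hypothetical names, and the keyword lists are placeholders for the ones your clinical director approves.

```python
from collections import Counter

# Hypothetical keyword map; crisis is listed first so it always wins a tie.
BUCKET_KEYWORDS = {
    "crisis": ["suicide", "hurt myself", "end it"],
    "basic_triage": ["anxious", "can't sleep", "panic", "depressed"],
    "admin": ["hours", "insurance", "parking", "appointment", "cost"],
}


def bucket_for(message: str) -> str:
    """Assign a message to the first bucket whose keyword it contains."""
    text = message.lower()
    for bucket, keywords in BUCKET_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return bucket
    return "unclassified"


def summarize(messages: list[str], top_n: int = 20):
    """Return bucket counts and the top_n most frequent normalized messages."""
    buckets = Counter(bucket_for(m) for m in messages)
    frequent = Counter(m.strip().lower() for m in messages).most_common(top_n)
    return buckets, frequent
```

Anything that lands in `unclassified` is worth a manual read, since those messages show where the keyword lists fall short.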

Identifying Safe Service Touchpoints

Not every step of the patient journey should be automated. You must isolate administrative bottlenecks that carry zero clinical risk and automate those first.

- Answering FAQs about operating hours, parking directions, and accepted insurance networks.

- Administering standardized baseline mental health assessments (like PHQ-9) prior to the first visit.

- Sending automated appointment reminders and collecting confirmation replies.

- Distributing post-session satisfaction surveys and routine follow-up check-ins.

- Collecting basic demographic information and known medication allergies.
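
A standardized assessment like the PHQ-9 is a good automation candidate because its scoring is simple arithmetic. The sketch below sums nine answers scored 0 to 3 and maps the total to the commonly published severity bands; `score_phq9` is a hypothetical helper, and the rule that any non-zero answer on item 9 (thoughts of self-harm) goes to a human is an assumption your clinical director should confirm.

```python
def score_phq9(answers: list[int]) -> dict:
    """Score nine PHQ-9 answers (each 0-3) and flag item 9 for human review."""
    if len(answers) != 9 or any(a not in (0, 1, 2, 3) for a in answers):
        raise ValueError("PHQ-9 needs exactly nine answers, each scored 0-3")
    total = sum(answers)
    # Commonly published severity bands; confirm with your clinical director.
    if total <= 4:
        band = "minimal"
    elif total <= 9:
        band = "mild"
    elif total <= 14:
        band = "moderate"
    elif total <= 19:
        band = "moderately severe"
    else:
        band = "severe"
    # Item 9 asks about thoughts of self-harm, so any non-zero answer is flagged.
    return {"total": total, "band": band, "needs_human_review": answers[8] > 0}
```

The function only scores and flags; interpreting the result stays with a licensed professional.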

Tool Selection and Integration Choices for Clinics

Choosing the right AI integration means prioritizing HIPAA-compliant architecture over raw conversational power. Off-the-shelf tools may retain patient conversations to train future models, whereas specialized healthcare platforms are built to silo your data securely.

The market currently offers three main categories: General LLMs, Healthcare-grade AI platforms, and Rule-based triage bots. Selecting the correct tier saves your clinic thousands in unnecessary software bloat and prevents massive compliance headaches down the road.

Successful operators integrate healthcare-grade AI with their existing Electronic Health Records (EHR). Enterprise solutions like the Zendesk HIPAA module or dedicated clinical tools like Wysa and Eleos Health are far safer than connecting a raw public API directly to your website.

| Feature | Rule-based Bots | Healthcare-grade AI | General LLMs (Public) |
|---|---|---|---|
| Data Privacy | Extremely High (Data stays on-premise) | High (Meets healthcare compliance standards) | Low (High risk of model-training leakage) |
| Contextual Understanding | Low (Relies on exact keyword matches) | Medium-High (Trained on clinical contexts) | Very High (Often hallucinates) |
| Implementation Cost | Low (Starts ~$150/mo) | High (Starts ~$1,000/mo) | Variable (Pay per token/usage) |
| Risk of Bad Medical Advice | Zero (Only uses static scripts) | Low (Built-in clinical guardrails) | Extreme (Requires custom prompt engineering) |
| Best For | Small clinics, pure admin tasks | Mid-to-large clinics, intake triage | Internal back-office research only |

Building Robust Crisis Escalation Protocols

A crisis escalation protocol acts as the mandatory emergency brake in every behavioral health AI system. It immediately transfers control to a human when a patient types a high-risk phrase, ensuring technology never stands in the way of life-saving intervention.

Deploying AI does not mean you fire your triage staff; it means your staff now only handles actual emergencies. When a patient signals severe distress, the system must instantly realize this is no longer an administrative workflow and execute a hard hand-off to a licensed professional.

Designing a frictionless hand-off is the single most critical engineering task in this setup. If a patient types "I want to end it" and your bot replies "Please wait 1 business day," your clinic faces catastrophic liability.

Keyword Triggers for Immediate Handover

The system must constantly scan incoming text for specific vocabulary or contextual cues that indicate risk (active vs passive ideation).

- Direct vocabulary triggers: e.g., "suicide", "hurt myself", "end it", "can't take it".

- Passive contextual triggers: e.g., "nobody cares", "want to disappear", "goodbye letters".

- Action: The AI immediately suspends all generative output.

- Action: The system outputs a pre-approved static message containing a national crisis hotline number.

- Action: The platform fires an urgent SMS/Slack webhook to the on-call triage nurse.

- Action: If the nurse fails to acknowledge the alert within 2 minutes, it auto-dials the clinical director.
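
The trigger-and-handover sequence above can be sketched as a small state machine. Everything here is illustrative: `ChatSession`, `detect_risk`, `handle_message`, and `escalate_if_unacknowledged` are hypothetical names, the `notify` callback stands in for your SMS, Slack, or auto-dial integration, and the hotline number is left as a placeholder for your clinic to fill in.

```python
from dataclasses import dataclass
from typing import Callable, Optional

DIRECT_TRIGGERS = ("suicide", "hurt myself", "end it", "can't take it")
PASSIVE_TRIGGERS = ("nobody cares", "want to disappear", "goodbye letter")
# Pre-approved static text; the hotline number is a placeholder.
CRISIS_MESSAGE = (
    "It sounds like you may need immediate support. A member of our clinical "
    "team has been alerted. You can also call [NATIONAL CRISIS HOTLINE NUMBER] now."
)
ACK_TIMEOUT_SECONDS = 120  # the 2-minute acknowledgement window

Notifier = Callable[[str, str], None]  # (recipient, alert text)


@dataclass
class ChatSession:
    generative_enabled: bool = True


def detect_risk(message: str) -> Optional[str]:
    """Return 'direct', 'passive', or None using plain substring matching."""
    text = message.lower().replace("\u2019", "'")  # normalize curly apostrophes
    if any(trigger in text for trigger in DIRECT_TRIGGERS):
        return "direct"
    if any(trigger in text for trigger in PASSIVE_TRIGGERS):
        return "passive"
    return None


def handle_message(session: ChatSession, message: str, notify: Notifier) -> Optional[str]:
    """On a risk trigger: stop generation, alert the nurse, return the static text."""
    risk = detect_risk(message)
    if risk is None:
        return None
    session.generative_enabled = False
    notify("on_call_nurse", f"URGENT: {risk} risk trigger in patient chat")
    return CRISIS_MESSAGE


def escalate_if_unacknowledged(seconds_since_alert: float, acknowledged: bool,
                               notify: Notifier) -> bool:
    """Alert the clinical director if the nurse has not acknowledged in time."""
    if not acknowledged and seconds_since_alert >= ACK_TIMEOUT_SECONDS:
        notify("clinical_director", "URGENT: crisis alert unacknowledged")
        return True
    return False
```

Substring matching deliberately errs toward false positives ("end it" also matches "send it"). In this setting an unnecessary handover costs far less than a missed one, but your clinical team should review what the triggers actually catch.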

Maintaining Licensed Professional Oversight

The software must operate entirely under the license and supervision of a qualified medical professional to maintain care standards.

- The Lead Psychiatrist must manually sign off on the initial knowledge base the AI uses to reply.

- Human staff must conduct a random audit of 20 AI chat logs every single week.

- Administrative staff must monitor a real-time dashboard for stalled or looping bot conversations.

- The clinical team holds a monthly review to update the trigger keywords based on new patient slang.

- The system includes a frictionless feedback loop letting patients flag an unhelpful bot interaction.
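
The weekly audit is easier to sustain when the sample is drawn by code rather than by whoever has time. A minimal sketch, assuming you can list the week's conversation IDs; `weekly_audit_sample` is a hypothetical helper.

```python
import random
from typing import Optional


def weekly_audit_sample(chat_ids: list[str], sample_size: int = 20,
                        seed: Optional[int] = None) -> list[str]:
    """Pick up to sample_size distinct chat IDs at random for human review."""
    rng = random.Random(seed)  # pass a seed only to make a given draw reproducible
    return rng.sample(chat_ids, min(sample_size, len(chat_ids)))
```

Recording the seed alongside the audit notes lets a reviewer later confirm which conversations were selected.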

The 30/60/90-Day Healthcare AI Implementation Plan

The safest healthcare AI 30/60/90 plan treats the first month as a silent shadow phase, completely hidden from patients. It minimizes risk by training the system on historical data and testing it internally before it ever interacts with the public.

Rushing an AI launch in a single week ends in chaos. Your clinical team will reject the tool because it creates errors, and patients will complain about poor service. Staging the rollout ensures high adoption rates and gives your team time to trust the technology.

Leadership must clearly communicate that AI is here to kill paperwork, not jobs. The key to adoption is involving your frontline triage nurses in training the AI from day one, giving them total ownership of the tool.

- Days 1-30 (The Shadow Phase): Connect the AI to your historical logs. Feed it past conversations and watch how it categorizes them without letting it talk to patients. Your admins correct its mistakes daily until it achieves a 95% routing accuracy rate.

- Days 31-60 (The Admin Rollout): Turn the AI on for live patients, but restrict it entirely to administrative queries (hours, insurance, parking). If a patient mentions symptoms, the AI immediately routes them to a human. Gather patient satisfaction scores.

- Days 61-90 (Full Triage Activation): Enable the AI to conduct baseline clinical assessments (PHQ-9/GAD-7). Let it evaluate severity and auto-schedule patients directly into the provider's calendar, with the crisis escalation webhooks live and tested end to end.
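
The 95% routing target in the shadow phase is straightforward to compute once admins have corrected the AI's labels. The sketch below is one way to do it; `routing_accuracy`, `crisis_misses`, and `ready_for_admin_rollout` are hypothetical names, and the extra requirement of zero missed crisis messages is an added assumption, not something the plan above specifies.

```python
def routing_accuracy(predicted: list[str], corrected: list[str]) -> float:
    """Share of messages where the AI's bucket matched the admin-corrected one."""
    if not predicted or len(predicted) != len(corrected):
        raise ValueError("need two non-empty label lists of equal length")
    matches = sum(p == c for p, c in zip(predicted, corrected))
    return matches / len(predicted)


def crisis_misses(predicted: list[str], corrected: list[str]) -> int:
    """Count true crisis messages that the AI routed anywhere else."""
    return sum(c == "crisis" and p != "crisis" for p, c in zip(predicted, corrected))


def ready_for_admin_rollout(predicted: list[str], corrected: list[str],
                            threshold: float = 0.95) -> bool:
    """Gate for day 31: accuracy target met and no crisis message misrouted."""
    return (routing_accuracy(predicted, corrected) >= threshold
            and crisis_misses(predicted, corrected) == 0)
```

A single headline accuracy figure can hide the one error that matters most, which is why the crisis bucket is checked separately.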

Measuring ROI Metrics Without Compromising Care

True ROI in clinical AI comes from recovered clinical hours, not from reducing headcounts. It saves money by handling the administrative burden that currently burns out your top earners, allowing them to see more patients with less fatigue.

Targeting headcount reduction as your primary success metric is a dangerous mindset that degrades care quality. Instead, measure how many additional billable therapy sessions your clinic can execute per week now that providers are free from triage duties.

Tracking the right chatbot-versus-human-therapist ROI metrics proves the value of the investment to your stakeholders. If an automated workflow saves your clinic $5,000 a month but frustrates your patients into switching providers, the investment has fundamentally failed.

Operational Cost Savings

These are the hard dollar figures your CFO or clinic manager needs to report at the end of the quarter.

- Increase in total monthly billable hours recovered from licensed therapists.

- Reduction in overtime wages previously paid to night-shift triage nurses.

- A 40% decrease in overall inbound phone call volume within the first three months.

- Reduction in Average Time to First Response from 2 hours down to under 1 minute.

- Decrease in insurance claim rejections because the AI collected complete billing data upfront.
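
To turn recovered hours into a figure for your CFO, a back-of-the-envelope calculation is enough to start. In the sketch below, only the 15 minutes per case and the roughly $1,000 monthly platform cost come from this article; the therapist count, caseload, and hourly rate are made-up inputs, and `monthly_recovered_value` is a hypothetical helper.

```python
def monthly_recovered_value(therapists: int, cases_per_week: int,
                            minutes_saved_per_case: float, hourly_rate: float,
                            platform_cost: float, weeks_per_month: int = 4) -> dict:
    """Estimate recovered billable hours and their net monthly value."""
    hours = therapists * cases_per_week * minutes_saved_per_case / 60 * weeks_per_month
    gross = hours * hourly_rate
    return {"hours": hours, "gross": gross, "net": gross - platform_cost}


# Illustrative inputs: 5 therapists, 20 intake cases each per week, $150/hour.
estimate = monthly_recovered_value(5, 20, 15, 150.0, 1000.0)
print(estimate)
# prints: {'hours': 100.0, 'gross': 15000.0, 'net': 14000.0}
```

The estimate assumes every recovered hour is actually rebooked as a billable session, so treat it as an upper bound until your scheduling data confirms it.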

Patient Engagement Improvements

Patient-side metrics prove that the automation has not destroyed the human element of your practice.

- Higher completion rates for pre-consultation assessment forms.

- Reduction in patient no-show rates due to reliable, automated SMS reminders.

- A 60% increase in converting after-hours website visitors into booked appointments.

- Customer Satisfaction (CSAT) scores remaining equal to or higher than human-only benchmarks.

- Lower drop-off rates during the initial inquiry chat phase.

The Top AI Mental Health Support Mistakes Clinics Make

The most expensive AI mental health support mistakes stem from treating patients like retail consumers. Clinics fail when they deploy empathetic-sounding bots that lack explicit clinical boundaries, confusing patients and creating immense legal liability.

Mental health patients are seeking clarity and professional direction, not a digital friend. Programming an AI to be overly sweet or agreeable tricks the patient into thinking they are speaking to a therapist, prompting them to unload deep trauma that the bot is fundamentally unequipped to handle.

Preventing these errors starts with brutally honest user experience (UX) design. Transparency is the foundation of patient trust; tricking a patient into thinking a bot is human will destroy your clinic's reputation permanently.

Launching Without Human Fallback

This is the catastrophic error that leads directly to malpractice lawsuits.

- Deploying an after-hours bot without an emergency hotline prominently pinned to the screen.

- Allowing the bot to trap patients in an infinite loop when it fails to understand their request.

- Hiding the "speak to a human" button deep in a menu, causing patient frustration.

- Failing to set up dashboard alerts for admins when a bot conversation stalls or fails.

- Letting the bot confirm appointments without a real-time data sync to the provider's calendar.

Ignoring Local Privacy Laws

Taking shortcuts with cheap or free AI tools will result in devastating regulatory fines.

- Piping patient data into standard ChatGPT without signing a Business Associate Agreement (BAA).

- Storing patient mental health histories inside generic marketing CRMs (like an unconfigured HubSpot).

- Skipping the mandatory explicit consent opt-in before processing health queries.

- Transmitting unencrypted patient names and symptoms to offshore data servers.

- Failing to establish an automated Data Retention Policy that deletes logs when a patient leaves the practice.
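
An automated retention policy can start as a scheduled job that lists which chat logs are due for deletion. The sketch below assumes each log record carries an `id` and a `discharged_on` date (or `None` for active patients); both field names and `logs_due_for_deletion` are hypothetical, and the retention window is a parameter because the correct value depends on your jurisdiction and legal advice.

```python
from datetime import date, timedelta


def logs_due_for_deletion(logs: list[dict], today: date, retention_days: int) -> list[str]:
    """Return IDs of logs whose patient left at least retention_days ago."""
    cutoff = today - timedelta(days=retention_days)
    return [
        log["id"]
        for log in logs
        # Active patients have discharged_on set to None and are never selected.
        if log["discharged_on"] is not None and log["discharged_on"] <= cutoff
    ]
```

Returning IDs instead of deleting directly leaves room for a human or a second job to review the list before anything is removed.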

Next Steps for Your AI Mental Health Workflow Implementation

Successful AI mental health workflow implementation requires treating the technology as an administrative assistant, not a clinical provider. That boundary protects your medical license while expanding your clinic's capacity to serve patients sustainably.

When you build a system that funnels patients into the correct pathways, mitigates risk with unbreakable escalation protocols, and fiercely guards privacy, the bottlenecks that frustrate your staff disappear. Your clinic will safely handle a higher volume of inquiries without forcing a single therapist to work late into the night.

Your mandate for tomorrow morning is clear: schedule a 30-minute meeting with your lead triage nurse and your clinic manager. Ask them to list the 20 most repetitive questions they answered this week and map out exactly how they handle a midnight crisis text today. That simple whiteboard list is the foundational blueprint for an AI system that actually works.